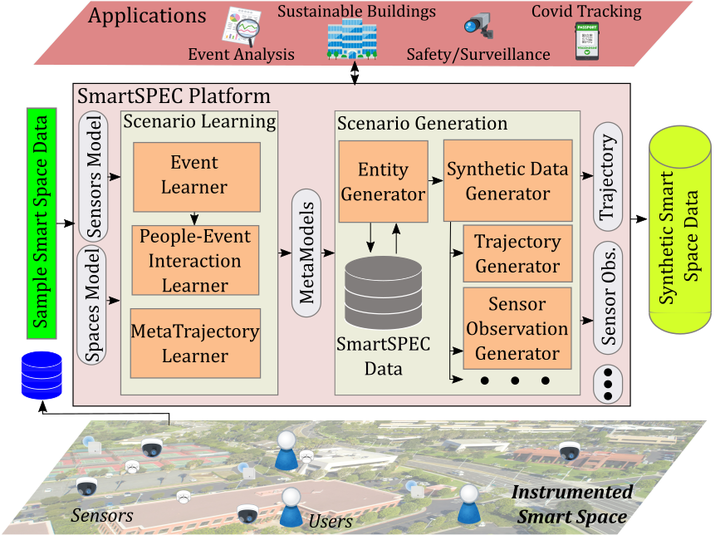

SmartSPEC: A framework to generate customizable, semantics-based smart space datasets

Abstract

This paper presents SmartSPEC, an approach for generating customizable synthetic smart space datasets from sensorized spaces in which people and events are embedded. Smart space datasets are critical for designing, deploying, and evaluating systems and applications in the face of heterogeneity, scalability, and robustness issues, leading to cost-effective operation that improves the safety, comfort, and convenience experienced by space occupants. However, obtaining realistic smart space datasets for testing and validation poses many challenges, ranging from a lack of fine-grained sensing to privacy and security concerns. SmartSPEC is a smart space simulator and data generator that leverages a semantic model, augmented with user-defined constraints, to represent important attributes, relationships, and external domain knowledge for a smart space. We employ machine learning (ML) approaches to extract relevant patterns from a sensorized space; these patterns drive an event-driven simulation strategy that generates realistic simulated data about the space (events, trajectories, sensor observation datasets, etc.). To evaluate the realism of the generated data, we develop a structured methodology and metrics to assess various aspects of smart space datasets, including trajectories of people and occupancy of spaces. Our experimental study considers two real-world settings and datasets: an instrumented smart campus building and a city-wide GPS dataset. Our results show that trajectories produced by SmartSPEC are realistic (1.4x to 4.4x more realistic than the best synthetic data baseline when compared to real-world data, depending on the scenario and configuration), and that sensor data derived from these trajectories adheres to the underlying semantics of the smart space when compared against synthetic sensor data baselines, even under hypothetical changes.